Start your AI Engineer career journey: Master Transformer Architecture and the Essentials of Modern AI

Accredited

Bringing real-world expertise from leading global companies

Master's degree, Social Research Methods & Statistics

Curriculum

Free lessons

1.1 Introduction to the course (2 min)

1.2 Course Materials and Notebooks (1 min)

1.3 What are LLMs? (3 min)

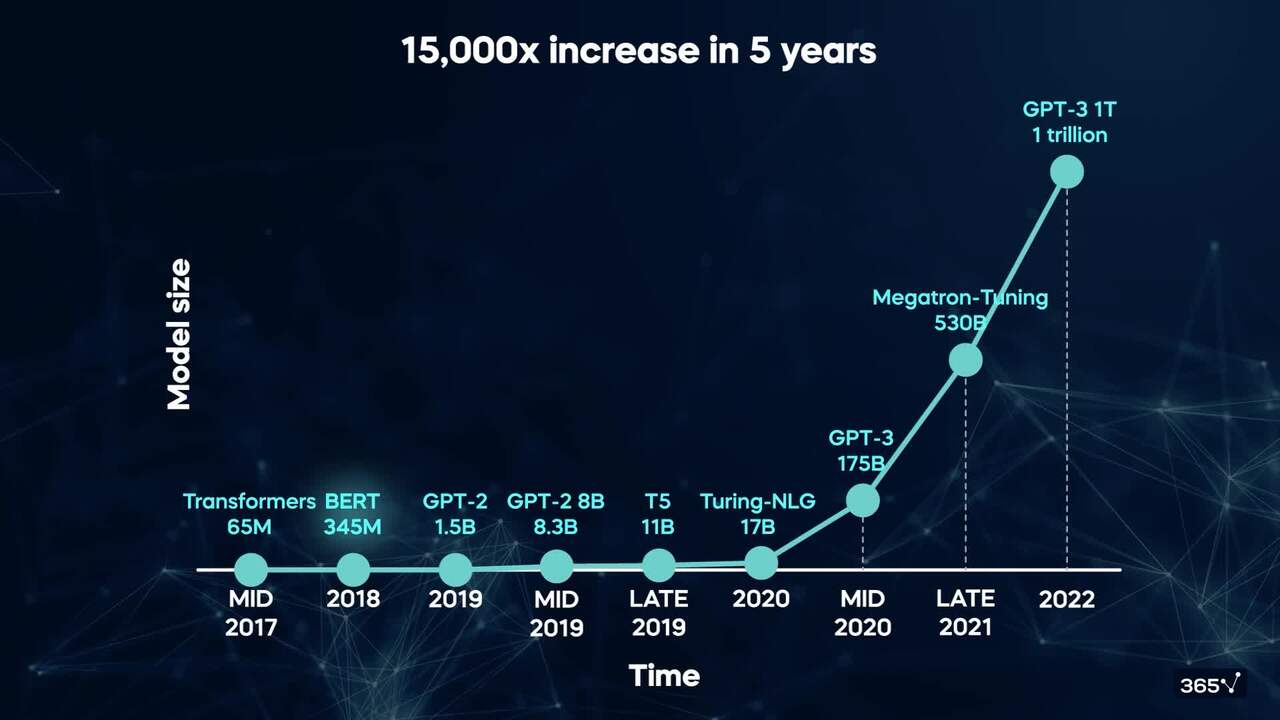

1.4 How large is an LLM? (3 min)

1.5 General-purpose models (1 min)

1.6 Pre-training and fine-tuning (3 min)

96% of our students recommend

#1 most reviewed

94% of AI and data science graduates successfully change

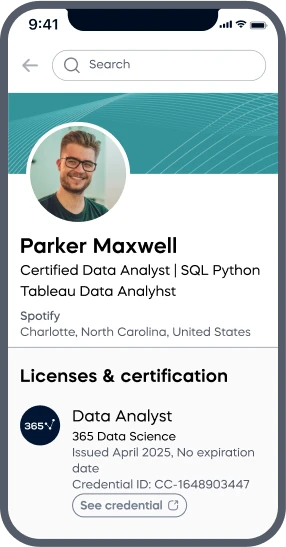

ACCREDITED certificates

Craft a resume and LinkedIn profile you're proud of, featuring certificates recognized by leading global institutions.

Earn CPE-accredited credentials that showcase your dedication, growth, and essential skills: the qualities employers value most.

Certificates are included with the Self-study learning plan.

How it WORKS