Is Astronomy data science?

Machine learning in astronomy – sure, it sounds like an oxymoron, but is that really the case? Machine learning is one of the newest ‘sciences’, while astronomy is one of the oldest. In fact, astronomy developed naturally as people realized that studying the stars is not only fascinating but can also help them in their everyday lives. For example, tracking the cycles of the stars helped create calendars (such as the Maya and the Proto-Bulgarian calendars). It also played a crucial role in navigation and orientation. A particularly important early development was the use of mathematical, geometrical and other scientific techniques to analyze the observed data. It started with the Babylonians, who laid the foundations for the astronomical traditions that would later be maintained in many other civilizations. Since then, data analysis has played a central role in astronomy.

So, after millennia of refining techniques for data analysis, you would think that no dataset could present a problem to astronomers anymore, right?

Well… that's not entirely true. The main problem that astronomers face now is… as strange as it may sound… the advances in technology.

Wait, what?! How can better technology be a problem? It most certainly can. By better technology I mean telescopes with a bigger field of view (FOV) and detectors with higher resolution. Combined, those factors mean that today’s telescopes gather a great deal more data than the previous generation of instruments. And that means astronomers must deal with volumes of data they've never seen before.

How was the Galaxy Zoo Project born?

In 2007, Kevin Schawinski found himself in that kind of situation.

As an astrophysicist at Oxford University, he was tasked with classifying 900,000 images of galaxies gathered by the Sloan Digital Sky Survey over a period of 7 years. He had to look at every single image and note whether the galaxy was elliptical or spiral and whether it was spinning. The task seems like a pretty trivial one. However, the huge amount of data made it almost impossible. Why? Because, according to estimates, one person would have had to work 24/7 for 3-5 years to complete it! Talk about a huge workload! So, after working for a week, Schawinski and his colleague Chris Lintott decided there had to be a better way to do this.

That is how Galaxy Zoo – a citizen science project – was born. If you’re hearing about it for the first time, citizen science means that the public participates in professional scientific research. Basically, Schawinski and Lintott’s idea was to distribute the images online and recruit volunteers to help label the galaxies. That is possible because the task of identifying a galaxy as elliptical or spiral is pretty straightforward.

Initially, they hoped for 20,000 – 30,000 people to contribute.

However, much to their surprise, more than 150,000 people volunteered for the project and the images were classified in about 2 years. Galaxy Zoo was a success and more projects followed, such as Galaxy Zoo Supernovae and Galaxy Zoo Hubble. In fact, there are several active projects to this day.

Using thousands of volunteers to analyze data may seem like a success, but it also shows how much trouble we are in right now. It took 150,000 people 2 years just to classify (and not even perform complex analysis on) data gathered from just 1 telescope! And now we are building telescopes that are a hundred, even a thousand times more powerful. So, in a couple of years’ time, volunteers won’t be enough to analyze the huge amounts of data we receive.

To quantify this, the rule of thumb in astronomy is that the amount of information we collect doubles every year. As an example, the Hubble Space Telescope, operating since 1990, gathers around 20 GB of data per week. And the Large Synoptic Survey Telescope (LSST), scheduled for early 2020, is expected to gather more than 30 terabytes of data every night.
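To get a feel for these numbers, here is a rough back-of-the-envelope sketch in Python. It only uses the figures quoted above; the assumptions that 1 TB equals 1,000 GB and that LSST observes on all 7 nights of a week are ours, made purely to keep the arithmetic simple.

```python
# Back-of-the-envelope comparison of the data rates quoted above.
# Assumptions (ours, not from any survey documentation):
#   - decimal units, 1 TB = 1000 GB
#   - LSST collects data on all 7 nights of a week
GB_PER_TB = 1000
NIGHTS_PER_WEEK = 7

hubble_gb_per_week = 20   # "around 20 GB of data per week"
lsst_tb_per_night = 30    # "more than 30 terabytes of data every night"

lsst_gb_per_week = lsst_tb_per_night * GB_PER_TB * NIGHTS_PER_WEEK
lsst_to_hubble_ratio = lsst_gb_per_week / hubble_gb_per_week


def growth_factor(years, doubling_time_years=1.0):
    """How much a quantity grows if it doubles every `doubling_time_years`."""
    return 2 ** (years / doubling_time_years)


print(f"LSST per week: {lsst_gb_per_week:,} GB")
print(f"LSST / Hubble: {lsst_to_hubble_ratio:,.0f}x")
print(f"Growth after 10 years of yearly doubling: {growth_factor(10):,.0f}x")
```

Under these assumptions, LSST would collect roughly ten thousand times more data per week than Hubble, and a yearly doubling compounds to about a thousandfold increase in a decade.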

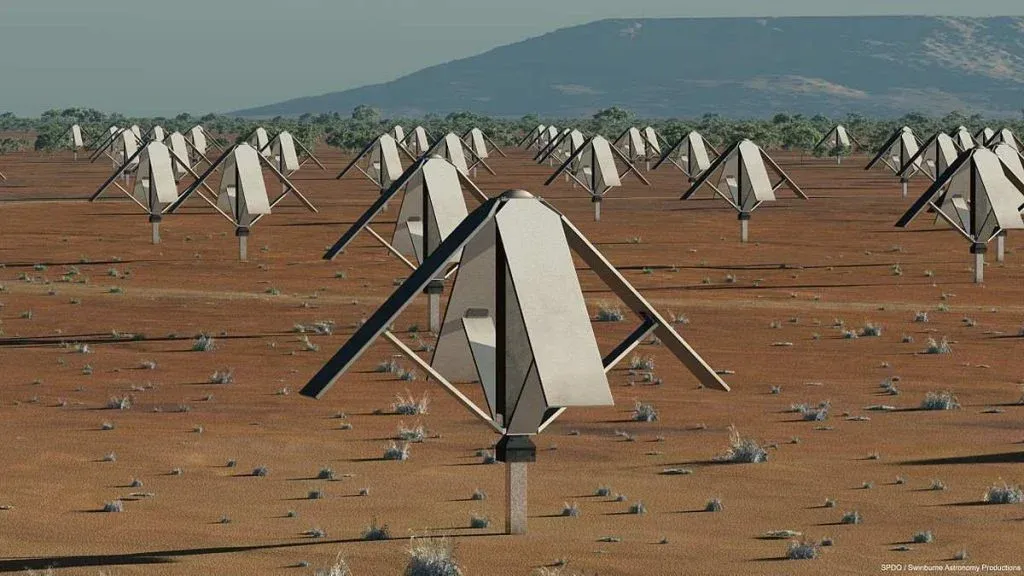

But that is nothing compared to the most ambitious project in astronomy – the Square Kilometre Array (SKA). SKA is an intergovernmental radio telescope to be built in Australia and South Africa with projected completion around 2024. With its 2000 radio dishes and 2 million low-frequency antennas, it is expected to produce more than 1 exabyte per day. That’s more than the entire internet for a whole year, produced in just one day!

Wow, can you imagine that!?

With that in mind, it is clear that this monstrous amount of data won’t be analyzed by online volunteers. Therefore, researchers are now recruiting a different kind of assistant – machines.

Why is everyone talking about Machine Learning?

Big data, machines, new knowledge… you know where we’re going, right?

Machine learning.

Well, it turns out that machine learning in astronomy is a thing, too. Why?

First of all, machine learning can process data much faster than other techniques. But it can also analyze that data without explicit instructions from us on how to do it. This is extremely important, as machine learning can pick up on things we don’t yet know how to look for and recognize unexpected patterns. For instance, it may distinguish different types of galaxies before we even know they exist.

This brings us to the idea that machine learning can also be less biased than us humans and, thus, more reliable in its analysis. For example, we may think there are 3 types of galaxies out there, but to a machine, they may well look like 5 distinct ones. And that could improve our modest understanding of the universe.
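To make the idea of finding groups without labels a bit more concrete, here is a minimal sketch of k-means clustering in plain Python. Everything in it is illustrative: the two numeric "features" per galaxy and the data points are made up, and k-means is just one simple clustering technique we picked for the example, not the method used by any particular survey.

```python
from math import dist


def kmeans(points, k, iterations=20):
    """Group points into k clusters without using any labels."""
    # Deterministic start: take the first point, then repeatedly add
    # the point that is farthest from all centroids chosen so far.
    centroids = [points[0]]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(dist(p, c) for c in centroids))
        centroids.append(farthest)

    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster))
            if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]

    labels = [min(range(k), key=lambda i: dist(p, centroids[i])) for p in points]
    return centroids, labels


# Made-up measurements: two hypothetical features per galaxy.
points = [
    (1.0, 1.0), (1.2, 0.9), (0.9, 1.1),
    (5.0, 5.0), (5.1, 4.8), (4.9, 5.2),
    (9.0, 1.0), (9.2, 1.1), (8.8, 0.9),
]
centroids, labels = kmeans(points, k=3)
print(labels)
```

The algorithm is never told which galaxy belongs to which type; it only sees the numbers. Note that in this sketch the number of groups, k, is still chosen by the analyst. In practice, one would compare several values of k (or use a method that estimates the number of groups) before concluding that there are 5 types rather than 3.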

No matter how intriguing these questions are, the real strength of machine learning is not restricted to solving classification problems. In fact, it has much broader applications that can extend to problems we previously deemed unsolvable.

What is gravitational lensing?

In 2017, a research group from Stanford University demonstrated the effectiveness of machine learning algorithms by using a neural network to study images of strong gravitational lensing.

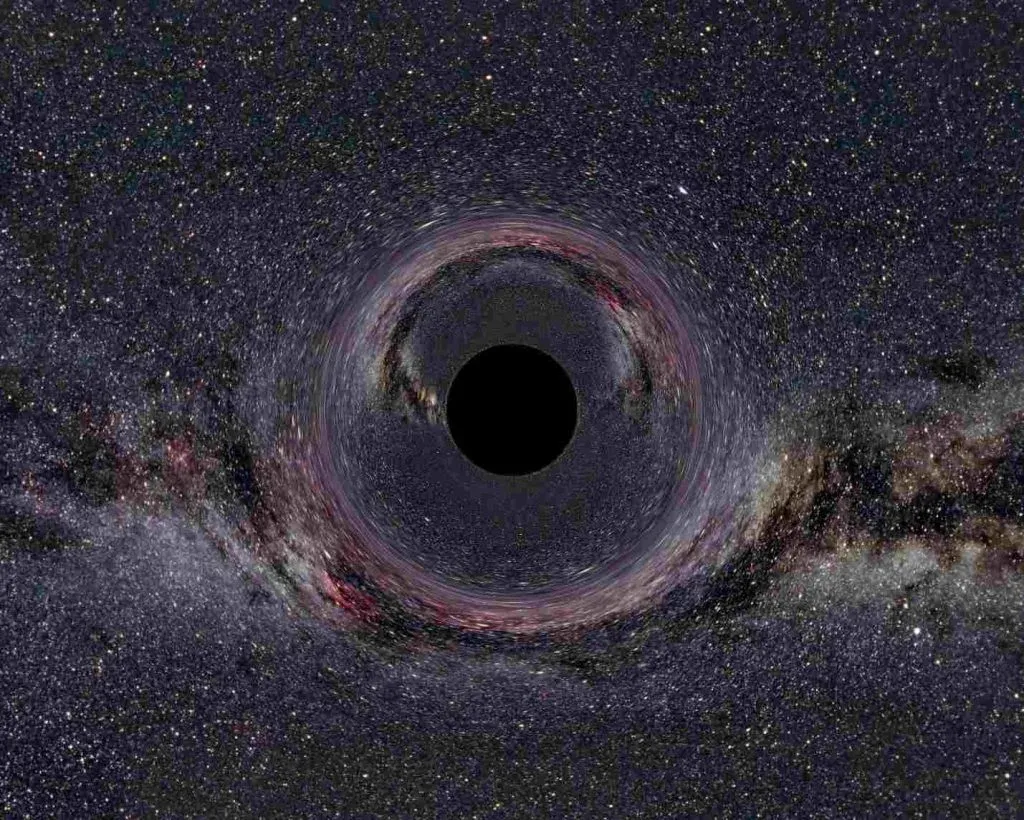

Gravitational lensing is an effect where the strong gravitational field around massive objects (e.g. a cluster of galaxies) can bend light and produce distorted images. It is one of the major predictions of Einstein’s General Theory of Relativity. That’s all well and good, but you might be wondering, why is it useful to study this effect?

Well, the thing you need to understand is that regular matter is not the only source of gravity. Scientists propose that there is an “invisible matter”, also known as dark matter, that constitutes most of the matter in the universe. However, we are unable to observe it directly (hence the name), and gravitational lensing is one way to “sense” its influence and quantify it.

Previously, this type of analysis was a tedious process that involved comparing actual images of lenses with a large number of computer simulations of mathematical lensing models. This could take weeks to months for a single lens. Now that’s what I would call an inefficient method.

But with the help of neural networks, the researchers were able to do the same analysis in just a few seconds (and, in principle, on a cell phone’s microchip), which they demonstrated using real images from NASA’s Hubble Space Telescope. That’s certainly impressive!

Overall, the ability to sift through large amounts of data and perform complex analyses very quickly and in a fully automated fashion could transform astrophysics in a way that is much needed for future sky surveys, which will look deeper into the universe and produce more data than ever before.

What are the current uses of machine learning?

Now that we know how powerful machine learning can be, it's natural to ask ourselves: has machine learning in astronomy already been deployed for something useful?

The answer is… kind of. The truth is that the application of machine learning in astronomy is very much a novel technique. Although astronomers have long used computational techniques, such as simulations, to aid them in research, ML is a different kind of beast.

Still, there are some examples of the use of ML in real life.

Let’s start with the easiest one. Images obtained from telescopes often contain “noise”. By noise we mean any random fluctuations not related to the object being observed. For example, wind and the structure of the atmosphere can affect the image produced by a telescope on the ground as the air gets in the way. That is the reason we send some telescopes to space – to eliminate the influence of Earth’s atmosphere. But how can you clear the noise produced by these factors? Via a machine learning algorithm called a Generative Adversarial Network, or GAN.

GANs consist of two elements – a neural network (the “generator”) that tries to generate realistic objects and another one (the “discriminator”) that tries to guess whether an object is real or generated. GANs have proven successful at removing noise from images and are also used in other fields, such as the self-driving car industry. In astronomy, it's very important to have as clear an image as possible. That's why this technique is being widely adopted.

Another example of AI comes from NASA.

However, this time it has non-space applications. I am talking about wildfire and flood detection. NASA has trained machines to recognize the smoke from wildfires using satellite images. The goal? To deploy hundreds of small satellites, all equipped with machine-learning algorithms embedded within sensors. With such a capability, the sensors could identify wildfires and send the data back to Earth in real-time, providing firefighters and others with up-to-date information that could dramatically improve firefighting efforts.

Is there anything else?

Yes: NASA is also researching an important application of machine learning in probe landings. One technique for space exploration is to send probes to land on asteroids, gather material and ship it back to Earth. Currently, in order to choose a suitable landing spot, the probe must take pictures of the asteroid from every angle and send them back to Earth. Scientists then analyze the images manually and give the probe instructions on what to do.

This elaborate process is not only complex but also rather limiting, for a number of reasons. First, it is really demanding for the people working on the project. Second, keep in mind that these probes may be a huge distance away from home. The signal carrying the commands may therefore need to travel for minutes or even hours to reach the probe, which makes real-time fine-tuning impossible. That is why NASA is trying to cut this “informational umbilical cord” and enable the probe to recognize the 3D structure of the asteroid and choose a landing site on its own. And the way to achieve it is by using neural networks.
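Where do those "minutes or even hours" come from? Radio signals travel at the speed of light, so the delay is simply the distance divided by that speed. Here is a small sketch of the arithmetic; the example distances are illustrative round numbers that we picked, not the positions of any specific mission.

```python
# Light-travel delay for a command sent to a distant probe.
SPEED_OF_LIGHT_KM_S = 299_792.458      # exact, by definition of the metre
ASTRONOMICAL_UNIT_KM = 149_597_870.7   # 1 AU, roughly the Earth-Sun distance


def one_way_delay_seconds(distance_au):
    """Time for a radio signal to cover `distance_au` astronomical units."""
    return distance_au * ASTRONOMICAL_UNIT_KM / SPEED_OF_LIGHT_KM_S


def round_trip_delay_minutes(distance_au):
    """Time to send a command and receive the probe's reply, in minutes."""
    return 2 * one_way_delay_seconds(distance_au) / 60


# Illustrative distances only (hypothetical probe positions).
for distance_au in (1.0, 2.0, 30.0):
    one_way_min = one_way_delay_seconds(distance_au) / 60
    print(f"{distance_au:>5.1f} AU: one way {one_way_min:6.1f} min, "
          f"round trip {round_trip_delay_minutes(distance_au):6.1f} min")
```

At 1 AU, a signal needs a little over 8 minutes each way, so even a single question-and-answer exchange with the probe takes more than a quarter of an hour. At 30 AU, the one-way delay alone exceeds 4 hours.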

What obstacles and limitations lie ahead for machine learning in Astronomy?

If machine learning is so powerful, why has it taken so long for it to be applied? Well, one of the reasons is that in order to train a machine learning algorithm you need a lot of labeled and processed data. Until recently, there just wasn’t enough data on some of the more exotic astronomical events for a computer to study. It should also be mentioned that neural networks are a type of black box – we don’t have a deep understanding of how they arrive at their results. Scientists are understandably nervous about relying on tools they don’t fully understand.

While we at 365 Data Science are very excited about all ML developments, we should note that they come with certain limitations.

Many take for granted that neural networks have much higher accuracy and little to no bias. Though that may be true in general, it is extremely important for researchers to understand that the input (or training data) they feed to the algorithm can affect the output in a negative way. An AI learns from its training set. Therefore, any biases, intentionally or unintentionally incorporated in the initial data, may persist in the algorithm.

For instance, if we think there are only 3 types of galaxies, a supervised learning algorithm would end up believing there are only 3 types of galaxies. Thus, even though the computer itself doesn’t add additional bias, it can still end up reflecting our own. That is to say, we may teach the computer to think in a biased way. It also follows that ML might not be able to identify some revolutionary new model.
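Here is a toy sketch of how that happens. It uses a very simple supervised method, a nearest-centroid classifier, written in plain Python. The three labels, the two numeric "features" per galaxy and all the data points are hypothetical and chosen only for illustration.

```python
from math import dist


def fit_centroids(samples):
    """Compute the mean feature vector for each label in the training set."""
    grouped = {}
    for features, label in samples:
        grouped.setdefault(label, []).append(features)
    return {
        label: tuple(sum(coord) / len(rows) for coord in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to `features`."""
    return min(centroids, key=lambda label: dist(features, centroids[label]))


# Hypothetical training set: we "believe" there are exactly 3 types.
training = [
    ((1.0, 1.0), "elliptical"), ((1.2, 0.9), "elliptical"),
    ((5.0, 5.0), "spiral"), ((5.1, 4.8), "spiral"),
    ((9.0, 1.0), "irregular"), ((9.2, 1.1), "irregular"),
]
centroids = fit_centroids(training)

# A made-up object that looks nothing like any training example.
unknown = (5.0, 12.0)
prediction = predict(centroids, unknown)
print(prediction)
```

The unknown object sits far away from everything in the training set, yet the classifier still returns one of the 3 labels it was taught, because those are the only answers it knows. It has no way of saying "this is something new".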

These factors are not deal-breakers. Nevertheless, scientists using these tools need to take them into account.

So, what comes next for machine learning?

The data we generate increasingly shapes the world we live in. So, it is essential that we introduce data processing techniques (such as machine learning) in every aspect of science. The more researchers start to use machine learning, the more demand there will be for graduates with experience in it. Machine learning is a hot topic even today but, in the future, it is only going to grow. And we’re yet to see what milestones we’ll achieve using AI and ML and how they will transform our lives.
