Many people see machine learning as a path to artificial intelligence, but for a statistician or a business professional, it can also be a powerful tool for achieving unprecedented predictive results.

Why is Machine Learning so Important?

Before we start learning, we would like to spend a few minutes emphasizing WHY machine learning is so important.

Everyone has heard of artificial intelligence, or AI for short. Usually, when we hear AI, we imagine robots going around, performing the same tasks as humans. But we have to understand that while some tasks are easy to automate, others are much harder, and we are still a long way from having a human-like robot.

Machine learning, however, is very real and is already here. It can be considered a subfield of AI, since most of what we imagine when we think about AI is based on machine learning.

In the past, we believed these robots of the future would need to learn everything from us. But the human brain is sophisticated, and not all the actions and activities it coordinates can be easily described. In 1959, Arthur Samuel came up with the brilliant idea that, instead of teaching computers everything ourselves, we should make them learn on their own. Samuel also coined the term “machine learning”, and since then, when we talk about a machine learning process, we refer to the ability of computers to learn autonomously.

What Are the Applications of Machine Learning?

While preparing the contents of this post, I wrote down examples with no further explanation, presuming everyone is familiar with them. And then I thought: do people know these are examples of machine learning?

Let’s consider a few.

Natural language processing, such as translation. If you thought Google Translate was just a really good dictionary, think again. The Oxford and Cambridge dictionaries are constantly improved by their editors. Google Translate, by contrast, is essentially a set of machine learning algorithms: rather than being updated entry by entry by people, it improves by learning from how words are actually used.

Oh, wow. What else?

While we’re still on the topic: Siri, Alexa, Cortana, and, more recently, Google Assistant all rely on speech recognition and speech synthesis. Some of these technologies allow the assistants to recognize or pronounce words they have never heard before. What they can do now is incredible, and they’ll be even more impressive in the near future!

And?

Spam filtering. Unimpressive, perhaps, but it is noteworthy that spam filters no longer follow a fixed set of rules. They have learned on their own what is spam and what isn’t.

Recommendation systems. Netflix, Amazon, Facebook. Everything that is recommended to you depends on your search activity, likes, previous behavior, and so on. It would be very hard for a person to come up with recommendations that suit you as well as those from these websites. Most importantly, they do this across platforms, devices, and apps. While some people consider it intrusive, that data is usually not processed by humans; often, it is so complex that humans could not make sense of it anyway. Machines, however, match sellers with buyers, movies with prospective viewers, and photos with people who want to see them. This has made our everyday lives noticeably more convenient. If somebody annoys you, you’ll see less of that person in your Facebook feed. Movies you’d find boring rarely make their way into your Netflix recommendations. Amazon offers you products before you know you need them.

Speaking of which, Amazon’s machine learning algorithms are so capable that the company can predict, with considerable confidence, what you are likely to buy and when. So, what does it do with that information? It can ship the product to a nearby warehouse in advance, so that when you order it, you receive it the same day. Incredible!

Machine Learning for Finance

Next on our list is financial trading. Trading involves random behavior, ever-changing data, and all kinds of factors, from political to legal, that lie far outside traditional finance. Financiers cannot predict much of that behavior, but machine learning algorithms can help account for it and respond to changes in the market far faster than any human could.

These are all business applications, but there are many more. You can predict whether an employee will stay with your company or leave, or decide whether a customer is worth your time based on whether they are likely to buy from a competitor or not buy at all. You can optimize processes, predict sales, and discover hidden opportunities. Machine learning opens up a whole new world of possibilities, which is a dream come true for the people working in a company’s strategy department. And these are uses that already exist. Then there is the next level: autonomous vehicles.

Machine Learning Algorithms

Self-driving cars were science fiction until a few years ago. Well, not anymore. Millions, if not billions, of miles have been driven by autonomous vehicles. How did that happen? Not through a hand-written set of rules. Rather, it was a set of machine learning algorithms that let cars learn how to drive.

We could go on for hours, but I believe you get the gist of “why machine learning”.

So, for you, it is not a question of why, but how.

That’s what our Machine Learning course in Python tackles: one of the most important skills for a thriving data science career, namely how to create machine learning algorithms!

How to Create a Machine Learning Algorithm

Creating a machine learning algorithm ultimately means building a model that outputs correct information, given the input data we provide.

For now, think of this model as a black box. We feed input, and it delivers an output. For instance, we may want to create a model that predicts the weather tomorrow, given meteorological information for the past few days. The input we’ll feed to the model could be metrics, such as temperature, humidity, and precipitation. The output we will obtain would be the weather forecast for tomorrow.
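To make the black box concrete, here is a minimal Python sketch of the interface: past readings go in, a forecast comes out. The names `DailyReading`, `Model`, and `average_temperature_model` are hypothetical, and the “model” is a deliberately naive stand-in that forecasts only tomorrow’s temperature as the average of recent days. A trained model could replace it without changing how we call it.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class DailyReading:
    """One day of (hypothetical) meteorological measurements."""
    temperature: float    # degrees Celsius
    humidity: float       # relative humidity, in percent
    precipitation: float  # millimetres


# From the outside, a model is just a function: past readings in, forecast out.
Model = Callable[[Sequence[DailyReading]], float]


def average_temperature_model(past_days: Sequence[DailyReading]) -> float:
    """Naive stand-in model: forecast tomorrow's temperature as the mean of recent days."""
    if not past_days:
        raise ValueError("need at least one day of data")
    return sum(day.temperature for day in past_days) / len(past_days)


history = [
    DailyReading(temperature=18.0, humidity=60.0, precipitation=0.0),
    DailyReading(temperature=20.0, humidity=55.0, precipitation=1.2),
    DailyReading(temperature=22.0, humidity=50.0, precipitation=0.0),
]

model: Model = average_temperature_model
print(model(history))  # 20.0
```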

Now, before we get comfortable and confident about the model’s output, we must train the model. Training is a central concept in machine learning, as this is the process through which the model learns how to make sense of the input data.

Once we have trained our model, we can simply feed it with data and obtain an output.

How to Train a Machine Learning Algorithm

The basic logic behind training an algorithm involves four ingredients:

- data

- model

- objective function

- and an optimization algorithm

Let’s explore each of them.

First, we must prepare a certain amount of data to train with.

Usually, this is historical data, which is readily available.
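As a hypothetical illustration of preparing historical data, the sketch below turns a short chronological series of daily readings into training examples: each day’s temperature and humidity form the input, and the next day’s observed temperature is the target. The readings and the function name `make_training_examples` are made up for this example.

```python
from typing import List, Tuple

# Hypothetical historical records, one per day: (temperature in °C, relative humidity in %).
daily_records: List[Tuple[float, float]] = [
    (18.0, 60.0),
    (20.0, 55.0),
    (22.0, 50.0),
    (21.0, 65.0),
    (19.0, 70.0),
]


def make_training_examples(records):
    """Pair each day's measurements (the input) with the next day's temperature (the target)."""
    examples = []
    for today, tomorrow in zip(records, records[1:]):
        inputs = today        # (temperature, humidity) observed today
        target = tomorrow[0]  # temperature actually observed the following day
        examples.append((inputs, target))
    return examples


training_data = make_training_examples(daily_records)
for inputs, target in training_data:
    print(inputs, "->", target)
```

The last day has no “tomorrow” yet, so it produces no example: five days of history yield four training pairs.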

Second, we need a model.

The simplest model we can train is a linear model. In the weather forecast example, that would mean finding some coefficients, multiplying each variable by its coefficient, and summing everything to get the output. As we will see later, though, the linear model is just the tip of the iceberg. Building on the linear model, deep learning lets us create complicated non-linear models, which often fit the data much better than a simple linear relationship.
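A minimal sketch of such a linear model, assuming two input variables (temperature and humidity). The function name `linear_model` and the coefficient values are placeholders; in practice, training is what chooses the coefficients.

```python
from typing import Sequence


def linear_model(inputs: Sequence[float], weights: Sequence[float]) -> float:
    """Multiply each input variable by its coefficient and sum the results."""
    if len(inputs) != len(weights):
        raise ValueError("need exactly one weight per input variable")
    return sum(w * x for w, x in zip(weights, inputs))


# Hypothetical coefficients for (temperature, humidity); training would normally pick these.
weights = [0.75, 0.125]
print(linear_model([20.0, 40.0], weights))  # 0.75 * 20 + 0.125 * 40 = 20.0
```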

The third ingredient is the objective function.

So far, we have taken data, fed it to the model, and obtained an output. Of course, we want this output to be as close to reality as possible. That’s where the objective function comes in. It estimates how correct the model’s outputs are, on average. The entire machine learning framework boils down to optimizing this function. For example, if our function measures the prediction error of the model, we would want to minimize this error or, in other words, minimize the objective function.
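One common objective function for measuring prediction error is the mean squared error: the average of the squared differences between predictions and observed values. The sketch below assumes this choice (other objective functions exist), and the numbers are made up for illustration. Lower values mean better predictions, so this is a function we would minimize.

```python
from typing import Sequence


def mean_squared_error(predictions: Sequence[float], targets: Sequence[float]) -> float:
    """Average squared difference between predictions and observed values (lower is better)."""
    if len(predictions) != len(targets) or not targets:
        raise ValueError("need two non-empty sequences of equal length")
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


predicted = [20.0, 22.0, 19.0]  # hypothetical model outputs
observed = [21.0, 22.0, 17.0]   # hypothetical actual temperatures
print(mean_squared_error(predicted, observed))  # (1 + 0 + 4) / 3, about 1.667
```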

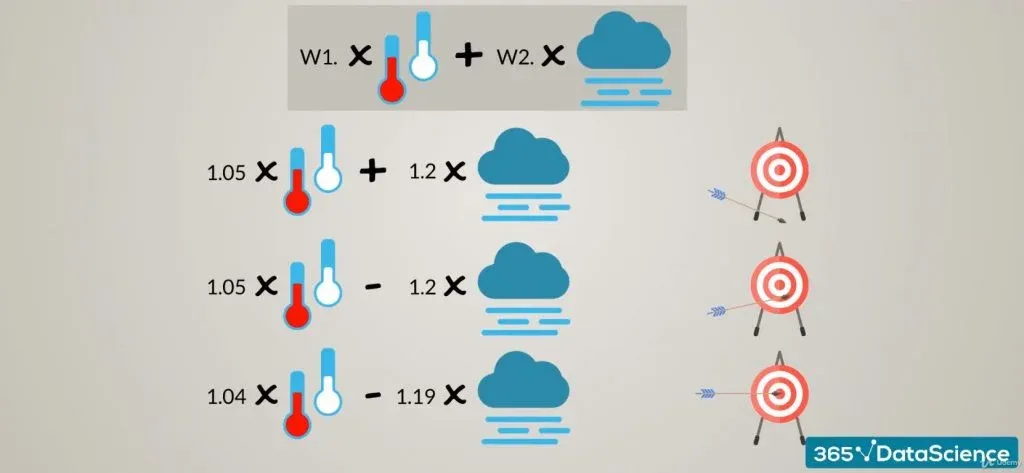

Our final ingredient is the optimization algorithm. It consists of the mechanics through which we vary the parameters of the model to optimize the objective function. For instance, suppose our weather forecast model is:

Weather tomorrow = W1 × temperature + W2 × humidity

The optimization algorithm may then go through many different values of W1 and W2.

W1 and W2 are the parameters that will change. For each set of parameters, we would calculate the objective function. Then, we would choose the model with the highest predictive power. How do we know which one is the best? Well, it is the one with the optimal objective function value (for example, the lowest prediction error), isn’t it? Great!
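A minimal sketch of this procedure, assuming mean squared error as the objective and a small hypothetical grid of candidate values for W1 and W2. Trying every combination like this is known as grid search. The training data is synthetic, generated from the rule 0.5 × temperature + 0.25 × humidity, so we know in advance which pair should win.

```python
import itertools
from typing import List, Tuple

# Synthetic training data: ((temperature, humidity), tomorrow's temperature),
# generated from the hypothetical rule 0.5 * temperature + 0.25 * humidity.
data: List[Tuple[Tuple[float, float], float]] = [
    ((24.0, 40.0), 22.0),
    ((16.0, 60.0), 23.0),
    ((30.0, 20.0), 20.0),
]


def predict(w1: float, w2: float, temperature: float, humidity: float) -> float:
    """Weather tomorrow = W1 * temperature + W2 * humidity."""
    return w1 * temperature + w2 * humidity


def objective(w1: float, w2: float) -> float:
    """Mean squared prediction error over the training data (lower is better)."""
    errors = [(predict(w1, w2, t, h) - y) ** 2 for (t, h), y in data]
    return sum(errors) / len(errors)


# Score every (W1, W2) pair on a small grid of candidate values.
candidate_values = [0.0, 0.25, 0.5, 0.75, 1.0]
results = {}
for w1, w2 in itertools.product(candidate_values, repeat=2):
    results[(w1, w2)] = objective(w1, w2)

# Keep the pair with the lowest error, i.e. the optimal objective function value.
best_w1, best_w2 = min(results, key=results.get)
print(best_w1, best_w2, results[(best_w1, best_w2)])  # 0.5 0.25 0.0
```

Grid search is easy to follow but scales poorly: with more parameters or a finer grid, the number of combinations grows very quickly, which is why practical optimization algorithms vary the parameters more cleverly.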

Did you notice we said four ingredients instead of four steps? This is intentional, as the machine learning process is iterative. We feed data into the model and measure its accuracy through the objective function. Then we vary the model’s parameters and repeat the operation. When we reach a point where we can no longer improve the objective function, or don’t need to, we stop, since we have found a good enough solution to our problem. Sounds exciting? Well, it definitely is!
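The iterative loop can be sketched with gradient descent, a widely used optimization algorithm that we swap in here as an example, since the article doesn’t name a specific one. Each iteration nudges W1 and W2 in the direction that reduces the mean squared error, re-evaluates the objective, and stops once an iteration no longer improves it by more than a small tolerance. The learning rate, tolerance, step limit, and synthetic data are all assumptions chosen for this toy example.

```python
from typing import List, Tuple

# Synthetic training data: ((temperature, humidity), tomorrow's temperature),
# generated from the hypothetical rule 0.5 * temperature + 0.25 * humidity.
data: List[Tuple[Tuple[float, float], float]] = [
    ((24.0, 40.0), 22.0),
    ((16.0, 60.0), 23.0),
    ((30.0, 20.0), 20.0),
]


def objective(w1: float, w2: float) -> float:
    """Mean squared prediction error of W1 * temperature + W2 * humidity."""
    return sum((w1 * t + w2 * h - y) ** 2 for (t, h), y in data) / len(data)


def gradient(w1: float, w2: float) -> Tuple[float, float]:
    """Partial derivatives of the mean squared error with respect to W1 and W2."""
    n = len(data)
    g1 = sum(2 * (w1 * t + w2 * h - y) * t for (t, h), y in data) / n
    g2 = sum(2 * (w1 * t + w2 * h - y) * h for (t, h), y in data) / n
    return g1, g2


def train(learning_rate: float = 1e-4, tolerance: float = 1e-10, max_steps: int = 100_000):
    w1, w2 = 0.0, 0.0  # arbitrary starting parameters
    current = objective(w1, w2)
    for step in range(1, max_steps + 1):
        g1, g2 = gradient(w1, w2)
        # Vary the parameters: take a small step against the gradient.
        w1, w2 = w1 - learning_rate * g1, w2 - learning_rate * g2
        new = objective(w1, w2)  # re-evaluate the objective function
        if current - new < tolerance:  # no meaningful improvement left: stop
            return w1, w2, new, step
        current = new
    return w1, w2, current, max_steps


w1, w2, final_error, steps = train()
print(f"W1={w1:.3f}, W2={w2:.3f}, error={final_error:.2e}, steps={steps}")
```

On this toy data, the loop should settle close to W1 = 0.5 and W2 = 0.25, the values the data was generated from. The learning rate is kept small because inputs such as humidity are fairly large numbers; with too large a step, the error would grow from one iteration to the next instead of shrinking.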

So dive deeper and learn more with The Machine Learning Algorithms A-Z course.