Convolutional Neural Networks with TensorFlow in Python

Master Convolutional Neural Networks: Building advanced neural network models with TensorFlow

$99.00

Lifetime access

What you get:

- 4 hours of content

- 24 downloadable resources

- World-class instructor

- Closed captions

- Q&A support

- Future course updates

- Course exam

- Certificate of achievement

What You Learn

- Master convolutional neural networks (CNNs) and computer vision to develop cutting-edge visual recognition systems

- Improve your career prospects by acquiring advanced deep learning skills with TensorFlow

- Adopt proven techniques for optimizing the performance of neural networks

- Master TensorBoard, TensorFlow’s indispensable visualization tool

- Apply TensorFlow to solve complex real-world computer vision challenges

- Strategically position your profile to capitalize on the ever-growing number of deep learning and AI development opportunities in the job market

Top Choice of Leading Companies Worldwide

Industry leaders and professionals globally rely on this top-rated course to enhance their skills.

Course Description

This course offers a deep dive into an advanced neural network architecture: the convolutional neural network (CNN). First, we explain the concept of image kernels and how they relate to CNNs. Then, you will get familiar with the CNN itself, its building blocks, and what makes this kind of network necessary for computer vision. You’ll apply the theory to the MNIST example using TensorFlow and, in a dedicated practical section, learn how to track and visualize useful metrics with TensorBoard. Later in the course, you’ll be introduced to a handful of techniques for improving the performance of neural networks and work through a large real-world practical project: classifying pictures of fashion items. Finally, we cap it all off with a look through the history of the most influential CNN architectures.
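To give a feel for what "applying a kernel" means, here is a minimal sketch in plain NumPy. It is not taken from the course materials: the function name `convolve2d`, the 5x5 sample image, and the Sobel-style kernel are all illustrative assumptions. The sketch slides a small matrix over a grayscale image and sums the element-wise products at each position.

```python
import numpy as np


def convolve2d(image, kernel):
    """Valid-mode 2D convolution of a grayscale image with a small kernel.

    Hypothetical helper for illustration only; not part of TensorFlow.
    """
    kh, kw = kernel.shape
    ih, iw = image.shape
    # Flip the kernel on both axes: this is what distinguishes true
    # convolution from cross-correlation.
    flipped = kernel[::-1, ::-1]
    out = np.zeros((ih - kh + 1, iw - kw + 1), dtype=float)
    for r in range(out.shape[0]):
        for c in range(out.shape[1]):
            # Element-wise product of the kernel with the patch under it
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * flipped)
    return out


# Assumed sample image: dark on the left, bright on the right
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)

# Sobel-style kernel that responds to left-to-right intensity changes
kernel = np.array([[1, 0, -1],
                   [2, 0, -2],
                   [1, 0, -1]], dtype=float)

edges = convolve2d(image, kernel)
print(edges)
```

The output is nonzero where a patch straddles the dark-to-bright boundary and zero where the patch is uniform, which is why kernels like this one are described as edge detectors. Note that convolution layers in deep learning libraries typically skip the kernel flip (they compute cross-correlation); because a CNN learns its kernel weights, the distinction does not matter in practice.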

Learn for Free

1.1 What does the course cover?

6 min

1.2 Why CNNs?

4 min

2.1 Introduction to image kernels

3 min

2.2 How do image transformations work?

7 min

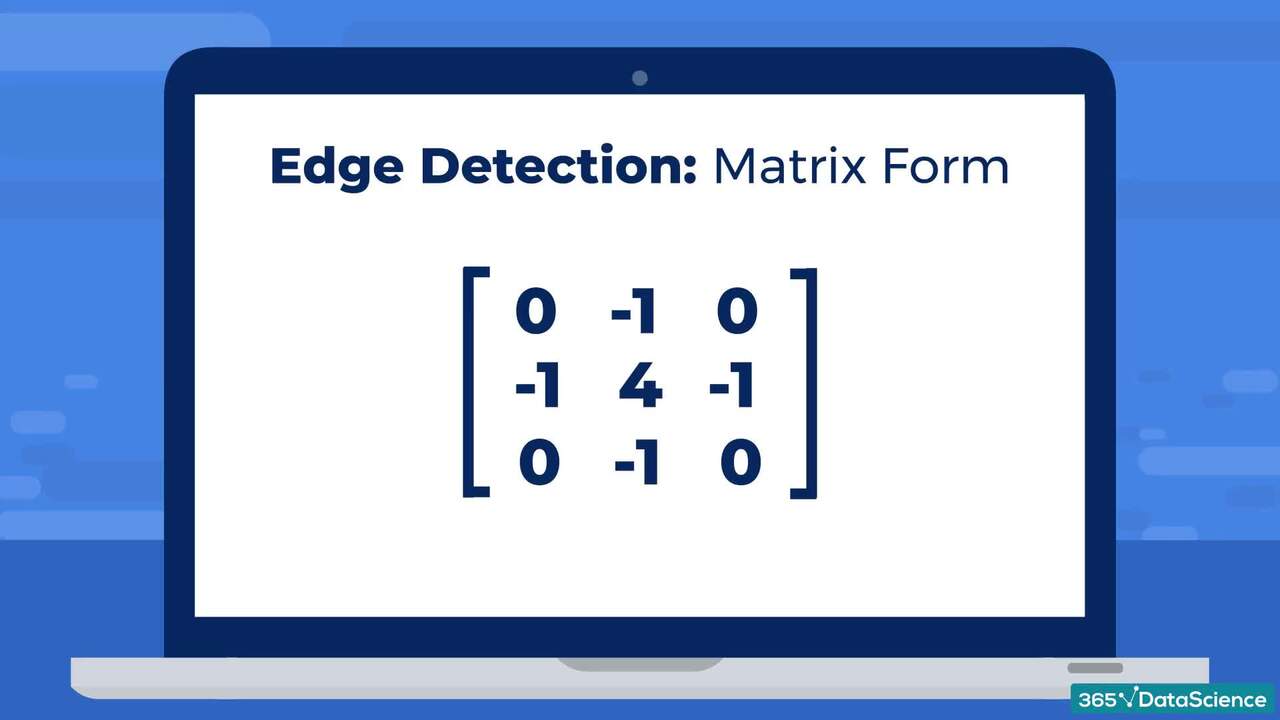

2.3 Kernels as matrices

2 min

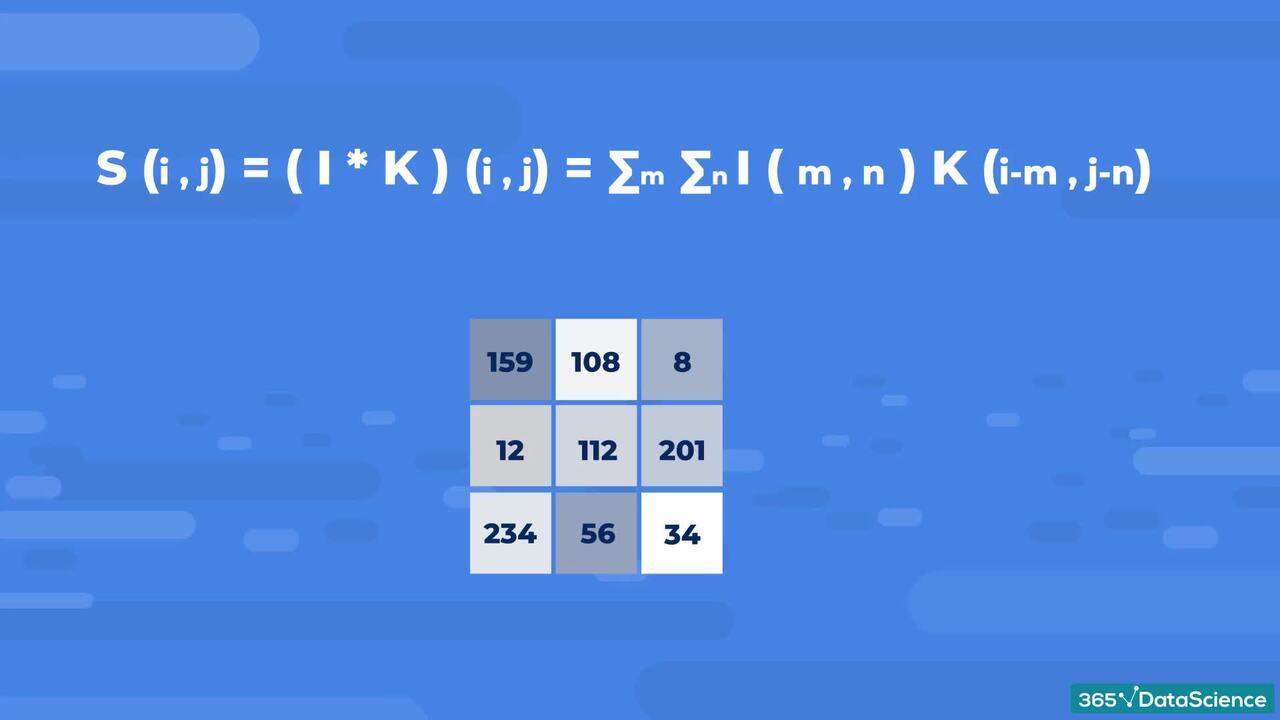

2.4 Convolution - applying kernels

2 min

Interactive Exercises

Practice what you've learned with coding tasks, flashcards, fill in the blanks, multiple choice, and other fun exercises.

Course Requirements

- You need to complete an introduction to Python before taking this course

- Basic skills in statistics, probability, and linear algebra are required

- It is highly recommended to take the Machine Learning in Python and Deep Learning with TensorFlow courses first

- You will need to install the Anaconda package, which includes Jupyter Notebook

Who Should Take This Course?

Level of difficulty: Advanced

- Aspiring data scientists, ML engineers, and AI developers

- Existing data scientists, ML engineers, and AI developers who want to improve their technical skills

Exams and Certification

A 365 Data Science Course Certificate is an excellent addition to your LinkedIn profile—demonstrating your expertise and willingness to go the extra mile to accomplish your goals.

Meet Your Instructor

Nikola Pulev is a Natural Sciences graduate from the University of Cambridge (UK) turned data science practitioner and a course instructor at 365 Data Science. Nikola has a strong passion for mathematics, physics, and programming. Over the years, he has taken part in multiple national and international competitions, where he has won numerous awards. One of Nikola’s most notable achievements so far is his silver medal from the International Physics Olympiad.

365 Data Science Is Featured at

Our top-rated courses are trusted by businesses worldwide.